[Deep Learning] Building a Line-Following Car with Deep Learning

After installing Docker, add the non-root user to the docker user group. Use the following commands:

sudo groupadd docker

sudo gpasswd -a ${USER} docker

sudo systemctl restart docker # CentOS7/Ubuntu

# Log out and back in so the new group membership takes effect

Model training

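The model described above can reuse the definition that ships with PyTorch directly. Dataset splitting and model training are both wrapped in the code of the line_follower_model package.

Next, run the following commands to start training: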

cd ~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model

ros2 run line_follower_model training

Error: ./best_line_follower_model_xy.pth cannot be opened

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model$ ros2 run line_follower_model training

/home/thomas/.local/lib/python3.10/site-packages/torchvision/models/_utils.py:208: UserWarning: The parameter 'pretrained' is deprecated since 0.13 and may be removed in the future, please use 'weights' instead.

warnings.warn(

/home/thomas/.local/lib/python3.10/site-packages/torchvision/models/_utils.py:223: UserWarning: Arguments other than a weight enum or `None` for 'weights' are deprecated since 0.13 and may be removed in the future. The current behavior is equivalent to passing `weights=ResNet18_Weights.IMAGENET1K_V1`. You can also use `weights=ResNet18_Weights.DEFAULT` to get the most up-to-date weights.

warnings.warn(msg)

Downloading: "https://download.pytorch.org/models/resnet18-f37072fd.pth" to /home/thomas/.cache/torch/hub/checkpoints/resnet18-f37072fd.pth

100.0%

0.672721, 30.660010

save

Traceback (most recent call last):

File "/home/thomas/dev_ws/install/line_follower_model/lib/line_follower_model/training", line 33, in <module>

sys.exit(load_entry_point('line-follower-model==0.0.0', 'console_scripts', 'training')())

File "/home/thomas/dev_ws/install/line_follower_model/lib/python3.10/site-packages/line_follower_model/training_member_function.py", line 131, in main

torch.save(model.state_dict(), BEST_MODEL_PATH)

File "/home/thomas/.local/lib/python3.10/site-packages/torch/serialization.py", line 628, in save

with _open_zipfile_writer(f) as opened_zipfile:

File "/home/thomas/.local/lib/python3.10/site-packages/torch/serialization.py", line 502, in _open_zipfile_writer

return container(name_or_buffer)

File "/home/thomas/.local/lib/python3.10/site-packages/torch/serialization.py", line 473, in __init__

super().__init__(torch._C.PyTorchFileWriter(self.name))

RuntimeError: File ./best_line_follower_model_xy.pth cannot be opened.

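This happens because the current user has no write permission on the folder: the directories are owned by root, so the training script cannot create the .pth file.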

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning$ ls -l

total 8

drwxr-xr-x 3 root root 4096 Mar 27 11:03 10_model_convert

drwxr-xr-x 7 root root 4096 Mar 27 14:29 line_follower_model

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning$ sudo chmod 777 *

[sudo] password for thomas:

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning$ ls

10_model_convert line_follower_model

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning$ ls -l

total 8

drwxrwxrwx 3 root root 4096 Mar 27 11:03 10_model_convert

drwxrwxrwx 7 root root 4096 Mar 27 14:29 line_follower_model
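The same problem can be caught before a long training run instead of at the first save. The following is a minimal sketch, not part of the line_follower_model package: the helper name can_save_checkpoint is hypothetical, and it only checks permissions for the current user.

```python
import os


def can_save_checkpoint(path):
    """Return True if the current user can create or overwrite `path`.

    Hypothetical helper for illustration; it is not part of the
    line_follower_model package.
    """
    if os.path.exists(path):
        # Overwriting an existing file needs write permission on the file.
        return os.access(path, os.W_OK)
    # Creating a new file needs write and search permission on its directory.
    directory = os.path.dirname(os.path.abspath(path))
    return os.path.isdir(directory) and os.access(directory, os.W_OK | os.X_OK)


target = "./best_line_follower_model_xy.pth"
if can_save_checkpoint(target):
    print("OK to save", target)
else:
    print("No write permission for", os.path.abspath(target))
```

Running this from the package directory before starting training shows whether the checkpoint can be written there.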

Run the training again:

ros2 run line_follower_model training

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model$ ros2 run line_follower_model training

/home/thomas/.local/lib/python3.10/site-packages/torchvision/models/_utils.py:208: UserWarning: The parameter 'pretrained' is deprecated since 0.13 and may be removed in the future, please use 'weights' instead.

warnings.warn(

/home/thomas/.local/lib/python3.10/site-packages/torchvision/models/_utils.py:223: UserWarning: Arguments other than a weight enum or `None` for 'weights' are deprecated since 0.13 and may be removed in the future. The current behavior is equivalent to passing `weights=ResNet18_Weights.IMAGENET1K_V1`. You can also use `weights=ResNet18_Weights.DEFAULT` to get the most up-to-date weights.

warnings.warn(msg)

0.722548, 6.242182

save

0.087550, 5.827808

save

0.045032, 0.380008

save

0.032235, 0.111976

save

0.027896, 0.039962

save

0.030725, 0.204738

0.025075, 0.036258

save

0.028099, 0.040965

0.016858, 0.032197

save

0.019491, 0.036230

0.018325, 0.043560

0.019858, 0.322563

0.015115, 0.070269

0.014820, 0.030373

Training takes a while, from tens of minutes to about an hour, so be patient. When it finishes, the generated file best_line_follower_model_xy.pth is in place:

thomas@thomas-J20:~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model$ ls -l

total 54892

-rw-rw-r-- 1 thomas thomas 44789846 Mar 28 13:28 best_line_follower_model_xy.pth
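The training log above prints two numbers per epoch and sometimes a "save" line. The sketch below replays those numbers under two assumptions that the log itself does not state: that the columns are (training loss, evaluation loss), and that the script saves a checkpoint whenever the evaluation loss drops below the best value seen so far. The function name epochs_that_save is hypothetical.

```python
# Loss pairs copied from the second training run above.
# Reading them as (train_loss, eval_loss) is an assumption.
LOGGED_LOSSES = [
    (0.722548, 6.242182),
    (0.087550, 5.827808),
    (0.045032, 0.380008),
    (0.032235, 0.111976),
    (0.027896, 0.039962),
    (0.030725, 0.204738),
    (0.025075, 0.036258),
    (0.028099, 0.040965),
    (0.016858, 0.032197),
    (0.019491, 0.036230),
    (0.018325, 0.043560),
    (0.019858, 0.322563),
    (0.015115, 0.070269),
    (0.014820, 0.030373),
]


def epochs_that_save(losses):
    """Return the epoch indices at which a new best evaluation loss occurs."""
    best = float("inf")
    saved = []
    for epoch, (_train_loss, eval_loss) in enumerate(losses):
        if eval_loss < best:
            best = eval_loss
            saved.append(epoch)  # the real script would call torch.save here
    return saved


print(epochs_that_save(LOGGED_LOSSES))
```

Under these assumptions the rule reproduces every "save" line in the excerpt. It also predicts a save after the last logged pair, which is where the excerpt ends.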

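Model conversion

Running the floating-point model trained with PyTorch directly on the RDK X3 is very inefficient. To improve efficiency and make use of the BPU's 5 TOPS of compute, the floating-point model has to be converted into a fixed-point model.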
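To give a feel for what "floating-point to fixed-point" means, here is a minimal sketch of generic symmetric int8 quantization. It is not the Horizon toolchain's algorithm; the function names and the threshold value are illustrative assumptions.

```python
def quantize_int8(values, threshold):
    """Map floats in [-threshold, threshold] onto int8 codes.

    Generic symmetric scheme for illustration only; values beyond the
    threshold are clipped to the int8 range.
    """
    scale = threshold / 127.0
    codes = []
    for value in values:
        code = round(value / scale)
        codes.append(max(-128, min(127, code)))
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from int8 codes."""
    return [code * scale for code in codes]


values = [-4.0, -1.0, 0.0, 0.5, 3.0]
codes, scale = quantize_int8(values, threshold=3.0)  # illustrative threshold
restored = dequantize(codes, scale)
for original, code, approx in zip(values, codes, restored):
    print(f"{original:+.3f} -> {code:+4d} -> {approx:+.3f}")
```

The clipped first value shows why the threshold matters: calibration data is used to choose thresholds that cover the activations the model actually produces.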

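Generating the ONNX model

Next, run generate_onnx to convert the trained model into an ONNX model:

ros2 run line_follower_model generate_onnx

After it runs, the generated model best_line_follower_model_xy.onnx appears in the current directory: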

thomas@J-35:~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model$ ls -l

total 98556

-rw-rw-r-- 1 thomas thomas 44700647 Apr 2 21:02 best_line_follower_model_xy.onnx

-rw-rw-r-- 1 thomas thomas 44789846 Apr 2 19:37 best_line_follower_model_xy.pth

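Starting the AI toolchain Docker container

Extract the previously downloaded AI toolchain Docker image and the OE package. The OE package has the following directory structure: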

.
├── bsp
│   └── X3J3-Img-PL2.2-V1.1.0-20220324.tgz
├── ddk
│   ├── package
│   ├── samples
│   └── tools
├── doc
│   ├── cn
│   ├── ddk_doc
│   └── en
├── release_note-CN.txt
├── release_note-EN.txt
├── run_docker.sh
└── tools
    ├── 0A_CP210x_USB2UART_Driver.zip
    ├── 0A_PL2302-USB-to-Serial-Comm-Port.zip
    ├── 0A_PL2303-M_LogoDriver_Setup_v202_20200527.zip
    ├── 0B_hbupdate_burn_secure-key1.zip
    ├── 0B_hbupdate_linux_cli_v1.1.tgz
    ├── 0B_hbupdate_linux_gui_v1.1.tgz
    ├── 0B_hbupdate_mac_v1.0.5.app.tar.gz
    └── 0B_hbupdate_win64_v1.1.zip

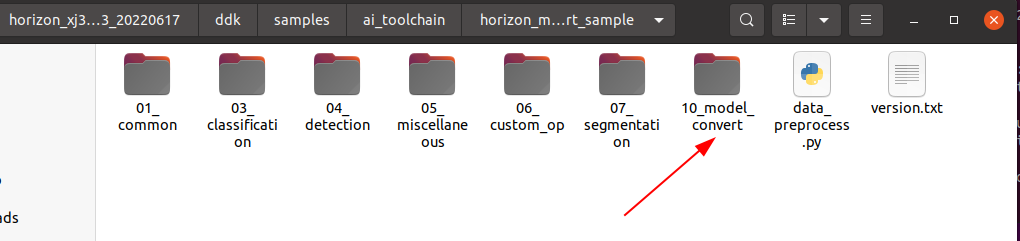

Copy the 10_model_convert package from the originbot_desktop repository into the ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/ directory of the OE development package.

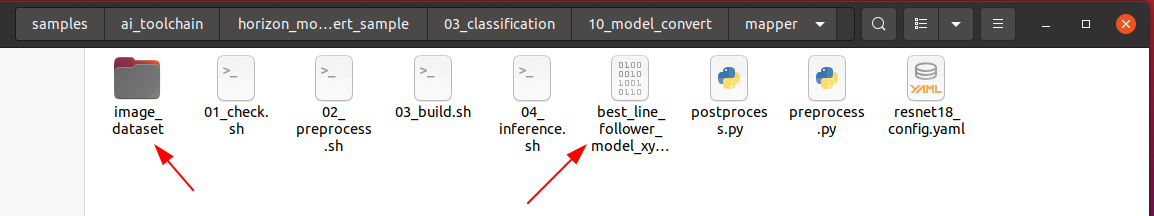

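Then copy the labeled dataset folder image_dataset from the line_follower_model package, together with the generated best_line_follower_model_xy.onnx model, into the ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/ directory. Keep only about 100 images in image_dataset; they are used for calibration.

Then return to the root directory of the OE package and load the AI toolchain Docker image: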
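Trimming the dataset to about 100 images by hand is tedious. The sketch below copies a random subset instead. It is only an illustration: the function name copy_calibration_subset and the example paths are assumptions, and the original workflow may simply have deleted the extra files.

```python
import os
import random
import shutil


def copy_calibration_subset(src_dir, dst_dir, count=100, seed=0):
    """Copy up to `count` randomly chosen files from src_dir into dst_dir.

    Hypothetical helper; a fixed seed keeps the selection reproducible.
    """
    files = sorted(
        name for name in os.listdir(src_dir)
        if os.path.isfile(os.path.join(src_dir, name))
    )
    chosen = random.Random(seed).sample(files, min(count, len(files)))
    os.makedirs(dst_dir, exist_ok=True)
    for name in chosen:
        shutil.copy2(os.path.join(src_dir, name), os.path.join(dst_dir, name))
    return sorted(chosen)


# Example call (paths are placeholders, adjust to your own layout):
# copy_calibration_subset("line_follower_model/image_dataset",
#                         "10_model_convert/mapper/image_dataset")
```

A random sample is used here so that the calibration images cover different positions along the track rather than only the first frames recorded.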

cd /home/thomas/Me/deeplearning/horizon_xj3_open_explorer_v2.3.3_20220727/

sh run_docker.sh /data/

Generating calibration data

Inside the running Docker container, do the following:

cd ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper

sh 02_preprocess.sh

The commands run as follows:

thomas@J-35:~/Me/deeplearning/horizon_xj3_open_explorer_v2.3.3_20220727$ sudo sh run_docker.sh /data/

[sudo] password for thomas:

run_docker.sh: 14: [: unexpected operator

run_docker.sh: 23: [: openexplorer/ai_toolchain_centos_7_xj3: unexpected operator

docker version is v2.3.3

dataset path is /data

open_explorer folder path is /home/thomas/Me/deeplearning/horizon_xj3_open_explorer_v2.3.3_20220727

[root@1e1a1a7e24f4 open_explorer]# cd ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper

[root@1e1a1a7e24f4 mapper]# sh 02_preprocess.sh

cd $(dirname $0) || exit

python3 ../../../data_preprocess.py \

--src_dir ./image_dataset \

--dst_dir ./calibration_data_bgr_f32 \

--pic_ext .rgb \

--read_mode opencv

Warning please note that the data type is now determined by the name of the folder suffix

Warning if you need to set it explicitly, please configure the value of saved_data_type in the preprocess shell script

regular preprocess

write:./calibration_data_bgr_f32/xy_008_160_31a8e30a-eca6-11ee-bb07-dfd665df7b81.rgb

write:./calibration_data_bgr_f32/xy_009_160_39c18c40-eca6-11ee-bb07-dfd665df7b81.rgb

write:./calibration_data_bgr_f32/xy_028_092_3327df66-ec9b-11ee-bb07-dfd665df7b81.rgb
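The warning in the output says the data type is taken from the folder-name suffix. As a rough sanity check on the generated files, the sketch below estimates the expected size of one calibration file. Two things are assumptions here, not facts from the log: that a "_f32" suffix means 4-byte float32 and anything else means 1-byte uint8, and that each file holds one image at the model input size of 3x224x224. Both helper names are hypothetical.

```python
def bytes_per_value(folder_name):
    """Guess the element size from the folder suffix (assumed convention)."""
    # Assumption: "_f32" means float32 (4 bytes); otherwise uint8 (1 byte).
    return 4 if folder_name.rstrip("/").endswith("_f32") else 1


def expected_file_bytes(folder_name, shape=(3, 224, 224)):
    """Expected size of one calibration file holding a single image."""
    count = 1
    for dim in shape:
        count *= dim
    return count * bytes_per_value(folder_name)


print(expected_file_bytes("./calibration_data_bgr_f32"))
```

If the files in calibration_data_bgr_f32 differ a lot from this estimate, the preprocessing settings are worth a second look before building the model.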

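Compiling the model into a fixed-point model

Next, run the following commands to generate the fixed-point model file, which will be deployed on the robot later: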

cd ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper

sh 03_build.sh

The command runs as follows:

[root@1e1a1a7e24f4 mapper]# sh 03_build.sh

2024-04-02 21:46:50,078 INFO Start hb_mapper....

2024-04-02 21:46:50,079 INFO log will be stored in /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/hb_mapper_makertbin.log

2024-04-02 21:46:50,079 INFO hbdk version 3.37.2

2024-04-02 21:46:50,080 INFO horizon_nn version 0.14.0

2024-04-02 21:46:50,080 INFO hb_mapper version 1.9.9

2024-04-02 21:46:50,081 INFO Start Model Convert....

2024-04-02 21:46:50,100 INFO Using abs path /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/best_line_follower_model_xy.onnx

2024-04-02 21:46:50,102 INFO validating model_parameters...

2024-04-02 21:46:50,231 WARNING User input 'log_level' deleted,Please do not use this parameter again

2024-04-02 21:46:50,231 INFO Using abs path /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/model_output

2024-04-02 21:46:50,232 INFO validating model_parameters finished

2024-04-02 21:46:50,232 INFO validating input_parameters...

2024-04-02 21:46:50,232 INFO input num is set to 1 according to input_names

2024-04-02 21:46:50,233 INFO model name missing, using model name from model file: ['input']

2024-04-02 21:46:50,233 INFO model input shape missing, using shape from model file: [[1, 3, 224, 224]]

2024-04-02 21:46:50,233 INFO validating input_parameters finished

2024-04-02 21:46:50,233 INFO validating calibration_parameters...

2024-04-02 21:46:50,233 INFO Using abs path /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/calibration_data_bgr_f32

2024-04-02 21:46:50,234 INFO validating calibration_parameters finished

2024-04-02 21:46:50,234 INFO validating custom_op...

2024-04-02 21:46:50,234 INFO custom_op does not exist, skipped

2024-04-02 21:46:50,234 INFO validating custom_op finished

2024-04-02 21:46:50,234 INFO validating compiler_parameters...

2024-04-02 21:46:50,235 INFO validating compiler_parameters finished

2024-04-02 21:46:50,239 WARNING Please note that the calibration file data type is set to float32, determined by the name of the calibration dir name suffix

2024-04-02 21:46:50,239 WARNING if you need to set it explicitly, please configure the value of cal_data_type in the calibration_parameters group in yaml

2024-04-02 21:46:50,240 INFO *******************************************

2024-04-02 21:46:50,240 INFO First calibration picture name: xy_008_160_31a8e30a-eca6-11ee-bb07-dfd665df7b81.rgb

2024-04-02 21:46:50,240 INFO First calibration picture md5:

83281dbdee2db08577524faa7f892adf /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/calibration_data_bgr_f32/xy_008_160_31a8e30a-eca6-11ee-bb07-dfd665df7b81.rgb

2024-04-02 21:46:50,265 INFO *******************************************

2024-04-02 21:46:51,682 INFO [Tue Apr 2 21:46:51 2024] Start to Horizon NN Model Convert.

2024-04-02 21:46:51,683 INFO Parsing the input parameter:{'input': {'input_shape': [1, 3, 224, 224], 'expected_input_type': 'YUV444_128', 'original_input_type': 'RGB', 'original_input_layout': 'NCHW', 'means': array([123.675, 116.28 , 103.53 ], dtype=float32), 'scales': array([0.0171248, 0.017507 , 0.0174292], dtype=float32)}}

2024-04-02 21:46:51,684 INFO Parsing the calibration parameter

2024-04-02 21:46:51,684 INFO Parsing the hbdk parameter:{'hbdk_pass_through_params': '--fast --O3', 'input-source': {'input': 'pyramid', '_default_value': 'ddr'}}

2024-04-02 21:46:51,685 INFO HorizonNN version: 0.14.0

2024-04-02 21:46:51,685 INFO HBDK version: 3.37.2

2024-04-02 21:46:51,685 INFO [Tue Apr 2 21:46:51 2024] Start to parse the onnx model.

2024-04-02 21:46:51,770 INFO Input ONNX model infomation:

ONNX IR version: 6

Opset version: 11

Producer: pytorch2.2.2

Domain: none

Input name: input, [1, 3, 224, 224]

Output name: output, [1, 2]

2024-04-02 21:46:52,323 INFO [Tue Apr 2 21:46:52 2024] End to parse the onnx model.

2024-04-02 21:46:52,324 INFO Model input names: ['input']

2024-04-02 21:46:52,324 INFO Create a preprocessing operator for input_name input with means=[123.675 116.28 103.53 ], std=[58.39484253 57.12000948 57.37498298], original_input_layout=NCHW, color convert from 'RGB' to 'YUV_BT601_FULL_RANGE'.

2024-04-02 21:46:52,750 INFO Saving the original float model: resnet18_224x224_nv12_original_float_model.onnx.

2024-04-02 21:46:52,751 INFO [Tue Apr 2 21:46:52 2024] Start to optimize the model.

2024-04-02 21:46:53,782 INFO [Tue Apr 2 21:46:53 2024] End to optimize the model.

2024-04-02 21:46:53,953 INFO Saving the optimized model: resnet18_224x224_nv12_optimized_float_model.onnx.

2024-04-02 21:46:53,953 INFO [Tue Apr 2 21:46:53 2024] Start to calibrate the model.

2024-04-02 21:46:53,954 INFO There are 100 samples in the calibration data set.

2024-04-02 21:46:54,458 INFO Run calibration model with kl method.

2024-04-02 21:47:06,290 INFO [Tue Apr 2 21:47:06 2024] End to calibrate the model.

2024-04-02 21:47:06,291 INFO [Tue Apr 2 21:47:06 2024] Start to quantize the model.

2024-04-02 21:47:09,926 INFO input input is from pyramid. Its layout is set to NHWC

2024-04-02 21:47:10,502 INFO [Tue Apr 2 21:47:10 2024] End to quantize the model.

2024-04-02 21:47:11,101 INFO Saving the quantized model: resnet18_224x224_nv12_quantized_model.onnx.

2024-04-02 21:47:14,165 INFO [Tue Apr 2 21:47:14 2024] Start to compile the model with march bernoulli2.

2024-04-02 21:47:15,502 INFO Compile submodel: main_graph_subgraph_0

2024-04-02 21:47:16,985 INFO hbdk-cc parameters:['--fast', '--O3', '--input-layout', 'NHWC', '--output-layout', 'NHWC', '--input-source', 'pyramid']

2024-04-02 21:47:17,276 INFO INFO: "-j" or "--jobs" is not specified, launch 2 threads for optimization

2024-04-02 21:47:17,277 WARNING missing stride for pyramid input[0], use its aligned width by default.

[==================================================] 100%

2024-04-02 21:47:25,296 INFO consumed time 8.06245

2024-04-02 21:47:25,555 INFO FPS=121.27, latency = 8246.2 us (see main_graph_subgraph_0.html)

2024-04-02 21:47:25,895 INFO [Tue Apr 2 21:47:25 2024] End to compile the model with march bernoulli2.

2024-04-02 21:47:25,896 INFO The converted model node information:

========================================================================================================================================

Node ON Subgraph Type Cosine Similarity Threshold

----------------------------------------------------------------------------------------------------------------------------------------

HZ_PREPROCESS_FOR_input BPU id(0) HzSQuantizedPreprocess 0.999952 127.000000

/conv1/Conv BPU id(0) HzSQuantizedConv 0.999723 3.186383

/maxpool/MaxPool BPU id(0) HzQuantizedMaxPool 0.999790 3.562476

/layer1/layer1.0/conv1/Conv BPU id(0) HzSQuantizedConv 0.999393 3.562476

/layer1/layer1.0/conv2/Conv BPU id(0) HzSQuantizedConv 0.999360 2.320694

/layer1/layer1.1/conv1/Conv BPU id(0) HzSQuantizedConv 0.997865 5.567303

/layer1/layer1.1/conv2/Conv BPU id(0) HzSQuantizedConv 0.998228 2.442273

/layer2/layer2.0/conv1/Conv BPU id(0) HzSQuantizedConv 0.995588 6.622376

/layer2/layer2.0/conv2/Conv BPU id(0) HzSQuantizedConv 0.996943 3.076967

/layer2/layer2.0/downsample/downsample.0/Conv BPU id(0) HzSQuantizedConv 0.997177 6.622376

/layer2/layer2.1/conv1/Conv BPU id(0) HzSQuantizedConv 0.996080 3.934074

/layer2/layer2.1/conv2/Conv BPU id(0) HzSQuantizedConv 0.997443 3.025215

/layer3/layer3.0/conv1/Conv BPU id(0) HzSQuantizedConv 0.998448 4.853349

/layer3/layer3.0/conv2/Conv BPU id(0) HzSQuantizedConv 0.998819 2.553357

/layer3/layer3.0/downsample/downsample.0/Conv BPU id(0) HzSQuantizedConv 0.998717 4.853349

/layer3/layer3.1/conv1/Conv BPU id(0) HzSQuantizedConv 0.998631 3.161120

/layer3/layer3.1/conv2/Conv BPU id(0) HzSQuantizedConv 0.998802 2.501193

/layer4/layer4.0/conv1/Conv BPU id(0) HzSQuantizedConv 0.999474 5.645166

/layer4/layer4.0/conv2/Conv BPU id(0) HzSQuantizedConv 0.999709 2.401657

/layer4/layer4.0/downsample/downsample.0/Conv BPU id(0) HzSQuantizedConv 0.999250 5.645166

/layer4/layer4.1/conv1/Conv BPU id(0) HzSQuantizedConv 0.999808 5.394126

/layer4/layer4.1/conv2/Conv BPU id(0) HzSQuantizedConv 0.999865 3.072157

/avgpool/GlobalAveragePool BPU id(0) HzSQuantizedConv 0.999965 17.365398

/fc/Gemm BPU id(0) HzSQuantizedConv 0.999967 2.144315

/fc/Gemm_NHWC2NCHW_LayoutConvert_Output0_reshape CPU -- Reshape

2024-04-02 21:47:25,897 INFO The quantify model output:

===========================================================================

Node Cosine Similarity L1 Distance L2 Distance Chebyshev Distance

---------------------------------------------------------------------------

/fc/Gemm 0.999967 0.007190 0.005211 0.008810

2024-04-02 21:47:25,898 INFO [Tue Apr 2 21:47:25 2024] End to Horizon NN Model Convert.

2024-04-02 21:47:26,084 INFO start convert to *.bin file....

2024-04-02 21:47:26,183 INFO ONNX model output num : 1

2024-04-02 21:47:26,184 INFO ############# model deps info #############

2024-04-02 21:47:26,185 INFO hb_mapper version : 1.9.9

2024-04-02 21:47:26,185 INFO hbdk version : 3.37.2

2024-04-02 21:47:26,185 INFO hbdk runtime version: 3.14.14

2024-04-02 21:47:26,186 INFO horizon_nn version : 0.14.0

2024-04-02 21:47:26,186 INFO ############# model_parameters info #############

2024-04-02 21:47:26,186 INFO onnx_model : /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/best_line_follower_model_xy.onnx

2024-04-02 21:47:26,186 INFO BPU march : bernoulli2

2024-04-02 21:47:26,187 INFO layer_out_dump : False

2024-04-02 21:47:26,187 INFO log_level : DEBUG

2024-04-02 21:47:26,187 INFO working dir : /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/model_output

2024-04-02 21:47:26,187 INFO output_model_file_prefix: resnet18_224x224_nv12

2024-04-02 21:47:26,188 INFO ############# input_parameters info #############

2024-04-02 21:47:26,188 INFO ------------------------------------------

2024-04-02 21:47:26,188 INFO ---------input info : input ---------

2024-04-02 21:47:26,189 INFO input_name : input

2024-04-02 21:47:26,189 INFO input_type_rt : nv12

2024-04-02 21:47:26,189 INFO input_space&range : regular

2024-04-02 21:47:26,189 INFO input_layout_rt : None

2024-04-02 21:47:26,190 INFO input_type_train : rgb

2024-04-02 21:47:26,190 INFO input_layout_train : NCHW

2024-04-02 21:47:26,190 INFO norm_type : data_mean_and_scale

2024-04-02 21:47:26,191 INFO input_shape : 1x3x224x224

2024-04-02 21:47:26,191 INFO mean_value : 123.675,116.28,103.53,

2024-04-02 21:47:26,191 INFO scale_value : 0.0171248,0.017507,0.0174292,

2024-04-02 21:47:26,192 INFO cal_data_dir : /open_explorer/ddk/samples/ai_toolchain/horizon_model_convert_sample/03_classification/10_model_convert/mapper/calibration_data_bgr_f32

2024-04-02 21:47:26,192 INFO ---------input info : input end -------

2024-04-02 21:47:26,192 INFO ------------------------------------------

2024-04-02 21:47:26,192 INFO ############# calibration_parameters info #############

2024-04-02 21:47:26,193 INFO preprocess_on : False

2024-04-02 21:47:26,193 INFO calibration_type: : kl

2024-04-02 21:47:26,193 INFO cal_data_type : N/A

2024-04-02 21:47:26,194 INFO ############# compiler_parameters info #############

2024-04-02 21:47:26,194 INFO hbdk_pass_through_params: --fast --O3

2024-04-02 21:47:26,194 INFO input-source : {'input': 'pyramid', '_default_value': 'ddr'}

2024-04-02 21:47:26,226 INFO Convert to runtime bin file sucessfully!

2024-04-02 21:47:26,226 INFO End Model Convert

[root@1e1a1a7e24f4 mapper]#
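The mean_value and scale_value entries in the build log are consistent with the widely used ImageNet normalization statistics rescaled to the 0 to 255 pixel range, where each scale is the reciprocal of the standard deviation. The sketch below shows that arithmetic. That the training script normalizes with these ImageNet statistics is an assumption inferred from the numbers, and the function name to_mapper_norm is hypothetical.

```python
# Commonly used ImageNet per-channel statistics for inputs in [0, 1].
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def to_mapper_norm(mean, std, max_value=255.0):
    """Convert [0, 1] mean/std into mean and scale for 0-255 pixel data.

    (x / 255 - mean) / std  ==  (x - mean * 255) * (1 / (std * 255))
    """
    mean_value = [m * max_value for m in mean]
    scale_value = [1.0 / (s * max_value) for s in std]
    return mean_value, scale_value


mean_value, scale_value = to_mapper_norm(IMAGENET_MEAN, IMAGENET_STD)
print("mean_value :", [round(m, 3) for m in mean_value])
print("scale_value:", [round(s, 7) for s in scale_value])
```

If a model is trained with different normalization, the mean and scale in the conversion config would need to change in the same way, otherwise the quantized model sees differently scaled inputs than the float model did.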

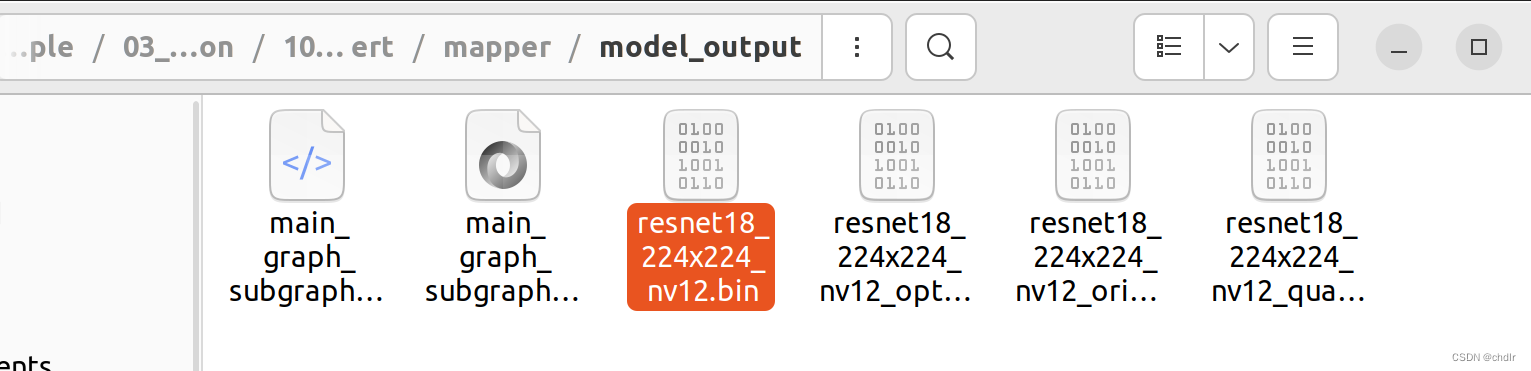

After a successful build, the final model file resnet18_224x224_nv12.bin is generated under the model_output path.

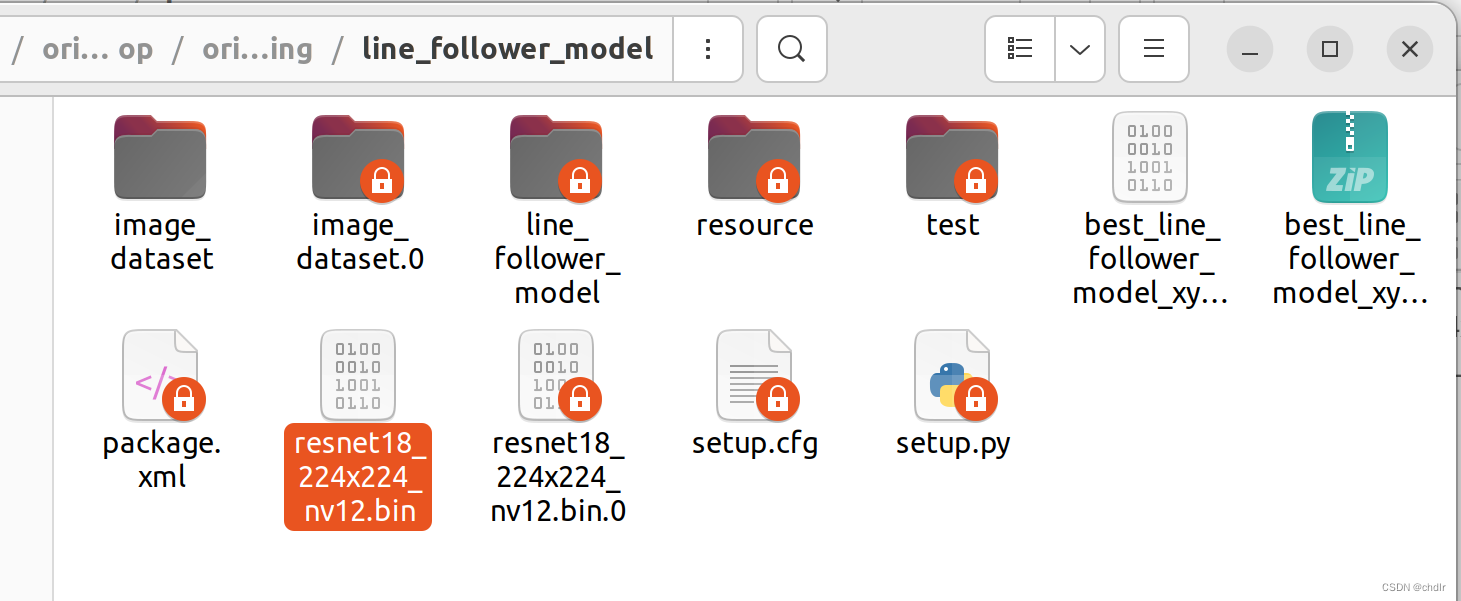

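Copy the model file resnet18_224x224_nv12.bin into the line_follower_model package for later deployment.

Model deployment

Copy the compiled fixed-point model resnet18_224x224_nv12.bin into the model folder of the line_follower_perception package on the OriginCar, replacing the existing model, then rebuild the workspace on the OriginCar.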

scp -r ./resnet18_224x224_nv12.bin <user>@<OriginCar-IP>:/root/dev_ws/src/origincar/origincar_deeplearning/line_follower_perception/model/

Once the build finishes, deploy the model with the following commands. The model_path and model_name parameters specify the model's path and name:

cd /root/dev_ws/src/origincar/origincar_deeplearning/line_follower_perception/

ros2 run line_follower_perception line_follower_perception --ros-args -p model_path:=model/resnet18_224x224_nv12.bin -p model_name:=resnet18_224x224_nv12

The command runs as follows:

root@ubuntu:~/dev_ws/src/origincar/origincar_deeplearning/line_follower_perception# ros2 run line_follower_perception line_follower_perception --ros-args -p model_path:=model/resnet18_224x224_nv12.bin -p model_name:=resnet18_224x224_nv12

[INFO] [1712122458.232674628] [dnn]: Node init.

[INFO] [1712122458.233179215] [LineFollowerPerceptionNode]: path:model/resnet18_224x224_nv12.bin

[INFO] [1712122458.233256001] [LineFollowerPerceptionNode]: name:resnet18_224x224_nv12

[INFO] [1712122458.233340036] [dnn]: Model init.

[EasyDNN]: EasyDNN version = 1.6.1_(1.18.6 DNN)

[BPU_PLAT]BPU Platform Version(1.3.3)!

[HBRT] set log level as 0. version = 3.15.25.0

[DNN] Runtime version = 1.18.6_(3.15.25 HBRT)

[A][DNN][packed_model.cpp:234][Model](2024-04-03,13:34:18.775.957) [HorizonRT] The model builder version = 1.9.9

[INFO] [1712122458.918322553] [dnn]: The model input 0 width is 224 and height is 224

[INFO] [1712122458.918465125] [dnn]: Task init.

[INFO] [1712122458.920699164] [dnn]: Set task_num [4]

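Starting the camera

First place the OriginCar in the line-following scene.

Use the following commands to start the camera driver in zero-copy mode, which speeds up internal image processing: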

export RMW_IMPLEMENTATION=rmw_cyclonedds_cpp

export CYCLONEDDS_URI='<CycloneDDS><Domain><General><NetworkInterfaceAddress>wlan0</NetworkInterfaceAddress></General></Domain></CycloneDDS>'

ros2 launch origincar_bringup usb_websocket_display.launch.py
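The CYCLONEDDS_URI value above hard-codes the wlan0 interface. If the board is connected through a different interface, the same XML can be generated rather than edited by hand. This is a small sketch using Python's standard library; the function name cyclonedds_uri is hypothetical, and the element names are simply the ones from the export line above.

```python
import xml.etree.ElementTree as ET


def cyclonedds_uri(interface):
    """Build the inline CycloneDDS XML config for one network interface."""
    root = ET.Element("CycloneDDS")
    domain = ET.SubElement(root, "Domain")
    general = ET.SubElement(domain, "General")
    address = ET.SubElement(general, "NetworkInterfaceAddress")
    address.text = interface
    # encoding="unicode" returns a str without an XML declaration.
    return ET.tostring(root, encoding="unicode")


print("export CYCLONEDDS_URI='%s'" % cyclonedds_uri("wlan0"))
```

The printed line can be pasted into the shell before running the launch command.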

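Once the camera starts successfully, the dynamically detected position of the path line appears in the line-following terminal.

Starting the robot

Start the OriginCar chassis, and the robot begins following the line autonomously: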

ros2 launch origincar_base origincar_bringup.launch.py
