Classifying Different Kinds of Images with Deep Learning: google/vit-base-patch16-224-in21k

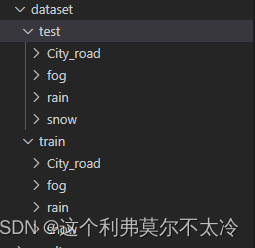

Dataset format
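The layout in the screenshot is not reproduced in text. As a rough sketch, assuming the dataset is a folder with one subdirectory per class (the usual layout when `load_dataset` is pointed at a local image directory) and assuming labels are numbered in sorted folder-name order, the label mappings used in the code below could be derived like this. The class names are taken from the `result_dict` in the inference script; the temporary directory and the `class_names_from_folders` helper are hypothetical.

```python
import os
import tempfile


def class_names_from_folders(root):
    """Return the sorted names of the class subfolders under root."""
    return sorted(
        name for name in os.listdir(root)
        if os.path.isdir(os.path.join(root, name))
    )


# Build a throwaway tree: <root>/<class_name>/ (image files would go inside).
root = tempfile.mkdtemp()
for name in ["snow", "rain", "fog", "City_road"]:
    os.makedirs(os.path.join(root, name))

labels = class_names_from_folders(root)

# Same string-valued mappings as in the training script.
label2id = {label: str(i) for i, label in enumerate(labels)}
id2label = {str(i): label for i, label in enumerate(labels)}

print(labels)
print(label2id)
```

Under these assumptions the resulting ids line up with the `result_dict` used at inference time (`City_road` is 0, `snow` is 3).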

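I first trained a ResNet from scratch, and the results were fairly poor. Afterwards I fine-tuned Google's pretrained model (google/vit-base-patch16-224-in21k), which gave very good results.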

The training code is as follows:

from datasets import load_dataset
# /home/huhao/TensorFlow2.0_ResNet/dataset
# /home/huhao/dataset
import numpy as np
# Note: load_metric is deprecated in newer datasets releases in favor of the evaluate library
from datasets import load_metric

# Load the image folder and hold out 20% of it as a test split
scene = load_dataset("/home/huhao/TensorFlow2.0_ResNet/dataset")
dataset = scene['train']
scene = dataset.train_test_split(test_size=0.2)

# Build the label <-> id mappings stored in the model config
labels = scene["train"].features["label"].names
label2id, id2label = dict(), dict()
for i, label in enumerate(labels):
    label2id[label] = str(i)
    id2label[str(i)] = label

from transformers import AutoFeatureExtractor
# google/vit-base-patch16-224-in21k
feature_extractor = AutoFeatureExtractor.from_pretrained("google/vit-base-patch16-224-in21k")

from torchvision.transforms import RandomResizedCrop, Compose, Normalize, ToTensor
# Crop to the model's input size, convert to a tensor, and normalize with the checkpoint's statistics
normalize = Normalize(mean=feature_extractor.image_mean, std=feature_extractor.image_std)
_transforms = Compose([RandomResizedCrop(feature_extractor.size), ToTensor(), normalize])

def transforms(examples):
    examples["pixel_values"] = [_transforms(img.convert("RGB")) for img in examples["image"]]
    del examples["image"]
    return examples

# Apply the transforms lazily, when a batch is accessed
scene = scene.with_transform(transforms)

from transformers import DefaultDataCollator
data_collator = DefaultDataCollator()

from transformers import AutoModelForImageClassification, TrainingArguments, Trainer

def compute_metric(eval_pred):
    metric = load_metric("accuracy")
    logits, labels = eval_pred
    print(logits, labels)
    print(len(logits), len(labels))
    # The predicted class is the index of the largest logit
    predictions = np.argmax(logits, axis=-1)
    print(len(predictions))
    print('predictions')
    print(predictions)
    return metric.compute(predictions=predictions, references=labels)

model = AutoModelForImageClassification.from_pretrained(
    "google/vit-base-patch16-224-in21k",
    num_labels=len(labels),
    id2label=id2label,
    label2id=label2id,
)

training_args = TrainingArguments(
    output_dir="./results",
    overwrite_output_dir=True,  # was the string 'True'; a boolean is expected
    per_device_train_batch_size=16,
    evaluation_strategy="steps",
    num_train_epochs=4,
    save_steps=100,
    eval_steps=100,
    logging_steps=10,
    learning_rate=2e-4,
    save_total_limit=2,
    remove_unused_columns=False,  # keep the "image" column so the transform can read it
    load_best_model_at_end=False,
    save_strategy='no',
)

trainer = Trainer(
    model=model,
    args=training_args,
    data_collator=data_collator,
    train_dataset=scene["train"],
    eval_dataset=scene["test"],
    tokenizer=feature_extractor,
    compute_metrics=compute_metric,
)

trainer.train()
trainer.evaluate()
trainer.save_model('/home/huhao/script/model')

The test code is as follows:

from transformers import AutoFeatureExtractor, AutoModelForImageClassification

extractor = AutoFeatureExtractor.from_pretrained("HaoHu/vit-base-patch16-224-in21k-classify-4scence")
model = AutoModelForImageClassification.from_pretrained("HaoHu/vit-base-patch16-224-in21k-classify-4scence")
# I have uploaded the trained model, so it can simply be downloaded and used here

from datasets import load_dataset
# /home/huhao/TensorFlow2.0_ResNet/dataset
# /home/huhao/dataset
import numpy as np
from datasets import load_metric

# Path the dataset is loaded from
scene = load_dataset("/home/huhao/script/dataset")
dataset = scene['train']
scene = dataset.train_test_split(test_size=0.2)

labels = scene["train"].features["label"].names
label2id, id2label = dict(), dict()
for i, label in enumerate(labels):
    label2id[label] = str(i)
    id2label[str(i)] = label

from transformers import AutoFeatureExtractor
# google/vit-base-patch16-224-in21k
feature_extractor = AutoFeatureExtractor.from_pretrained("HaoHu/vit-base-patch16-224-in21k-classify-4scence")

from torchvision.transforms import RandomResizedCrop, Compose, Normalize, ToTensor
normalize = Normalize(mean=feature_extractor.image_mean, std=feature_extractor.image_std)
_transforms = Compose([RandomResizedCrop(feature_extractor.size), ToTensor(), normalize])

def transforms(examples):
    examples["pixel_values"] = [_transforms(img.convert("RGB")) for img in examples["image"]]
    del examples["image"]
    return examples

scene = scene.with_transform(transforms)

from transformers import DefaultDataCollator
data_collator = DefaultDataCollator()

from transformers import AutoModelForImageClassification, TrainingArguments, Trainer

training_args = TrainingArguments(
    output_dir="./results",
    overwrite_output_dir=True,  # was the string 'True'; a boolean is expected
    per_device_train_batch_size=16,
    evaluation_strategy="steps",
    num_train_epochs=4,
    save_steps=100,
    eval_steps=100,
    logging_steps=10,
    learning_rate=2e-4,
    save_total_limit=2,
    remove_unused_columns=False,
    load_best_model_at_end=False,
    save_strategy='no',
)

model = AutoModelForImageClassification.from_pretrained(
    "HaoHu/vit-base-patch16-224-in21k-classify-4scence",
    num_labels=len(labels),
    id2label=id2label,
    label2id=label2id,
)

def compute_metric(eval_pred):
    metric = load_metric("f1")
    logits, labels = eval_pred
    print(len(logits), len(labels))
    predictions = np.argmax(logits, axis=-1)
    print('Evaluating on the test set')
    print('labels')
    print(labels)
    print('predictions')
    print(predictions)
    # average='macro': unweighted mean of the per-class F1 scores
    return metric.compute(predictions=predictions, references=labels, average='macro')

# Evaluation only, so no train_dataset is passed
trainer = Trainer(
    model=model,
    args=training_args,
    data_collator=data_collator,
    eval_dataset=scene["test"],
    tokenizer=feature_extractor,
    compute_metrics=compute_metric,
)

compute_metrics = trainer.evaluate()
# Example output (from a run that used the accuracy metric):
# {'eval_loss': 0.04495017230510712, 'eval_accuracy': 0.9943181818181818, 'eval_runtime': 30.8715, 'eval_samples_per_second': 11.402, 'eval_steps_per_second': 1.425}
print('Final result, eval_f1:')
print(compute_metrics['eval_f1'])
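To make the `average='macro'` argument concrete: macro F1 computes one F1 score per class and then takes their unweighted mean, so every class counts equally regardless of how many samples it has. A minimal pure-Python sketch follows, with made-up predictions and a hypothetical helper named `macro_f1`. It illustrates the calculation only and is not the metric library's implementation.

```python
def macro_f1(predictions, references):
    """Unweighted mean of per-class F1 over every class seen in either list."""
    classes = sorted(set(predictions) | set(references))
    f1_scores = []
    for c in classes:
        pairs = list(zip(predictions, references))
        tp = sum(1 for p, r in pairs if p == c and r == c)  # true positives
        fp = sum(1 for p, r in pairs if p == c and r != c)  # false positives
        fn = sum(1 for p, r in pairs if p != c and r == c)  # false negatives
        denom = 2 * tp + fp + fn
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the denominator is 0
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


# Illustrative class ids for four classes (0..3); one class-0 sample is misclassified as 1.
references = [0, 0, 1, 1, 2, 3]
predictions = [0, 1, 1, 1, 2, 3]

score = macro_f1(predictions, references)
print(round(score, 4))
```

Here the single mistake lowers the F1 of both class 0 (a missed sample) and class 1 (a false positive), while classes 2 and 3 stay at 1.0.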

from transformers import AutoFeatureExtractor, AutoModelForImageClassification, ImageClassificationPipeline
from transformers import pipeline
import os

extractor = AutoFeatureExtractor.from_pretrained("HaoHu/vit-base-patch16-224-in21k-classify-4scence")
model = AutoModelForImageClassification.from_pretrained("HaoHu/vit-base-patch16-224-in21k-classify-4scence")

#generator = ImageClassificationPipeline(model=model, tokenizer=extractor)
vision_classifier = pipeline(task="image-classification", model=model, feature_extractor=extractor)

# Map each class name to the numeric id that gets printed
result_dict = {'City_road': 0, 'fog': 1, 'rain': 2, 'snow': 3}

val_path = '/home/huhao/script/val/'
all_img = os.listdir(val_path)

for img in all_img:
    tmp_score = 0
    end_label = ''
    img_path = os.path.join(val_path, img)
    score_list = vision_classifier(img_path)
    # Keep the label with the highest score
    for sample in score_list:
        score = sample['score']
        label = sample['label']
        if tmp_score < score:
            tmp_score = score
            end_label = label
    print(result_dict[end_label])
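The inner loop above keeps the label with the highest score. Assuming the pipeline returns a list of `{'label': ..., 'score': ...}` dicts for each image, as the loop implies, the same selection can be written with `max`. The scores below are made up for illustration, and `top_label` is a hypothetical helper.

```python
def top_label(score_list):
    """Return the label of the highest-scoring entry."""
    return max(score_list, key=lambda sample: sample["score"])["label"]


result_dict = {"City_road": 0, "fog": 1, "rain": 2, "snow": 3}

# Mock pipeline output for one image (illustrative scores, not real results).
score_list = [
    {"label": "fog", "score": 0.07},
    {"label": "snow", "score": 0.88},
    {"label": "rain", "score": 0.03},
    {"label": "City_road", "score": 0.02},
]

class_id = result_dict[top_label(score_list)]
print(class_id)
```

Using `max` with a `key` function avoids the manual `tmp_score` and `end_label` bookkeeping and does not depend on the order of the returned entries.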
